Umut Ozyurt

Computer Vision & Deep Learning Researcher

My research focuses on generative vision, 3D geometry, and vision-language models for reasoning about the physical world. I am an undergraduate researcher at Middle East Technical University (METU), ranked #1 in Turkey for Computer Science (QS, THE) and Computer Vision (CSRankings).

Past Experience Summary:

- VLM 3D spatial reasoning with collision-aware VQA obtained from scene meshes (INSAIT, ETH Zürich, paper in progress)

- Human-centric perception for robotics and uncertainty estimation, resulting in an ICRA '25 publication (University of Cambridge)

- Controlled and identity-preserving face generation/editing via diffusion models (METU ImageLab & Syntonym, paper in submission)

- Zero-shot face recognition via attribute descriptions without anchor images (Infodif)

- Real-time thermal and RGB human detection for edge UAV platforms, yielding an IEEE IISEC '23 oral presentation paper (METU Intelligent Systems Lab & AsisGuard)

I complement my research with nearly 3 years of professional experience. This background allows me to implement complex model architectures from scratch, manage full training pipelines, and conduct large-scale experiments with ease. I aim to develop controllable and data-efficient multimodal systems reliable enough for real-world interaction.

Middle East Technical University (METU)

B.Sc. in Computer Science (Senior Year)

CGPA: 3.86 / 4.00 (Rank: 10/288)

Academic

Research Affiliations

University of Cambridge

Middle East Technical University (METU)

Selected Publications

Research contributions in computer vision and deep learning

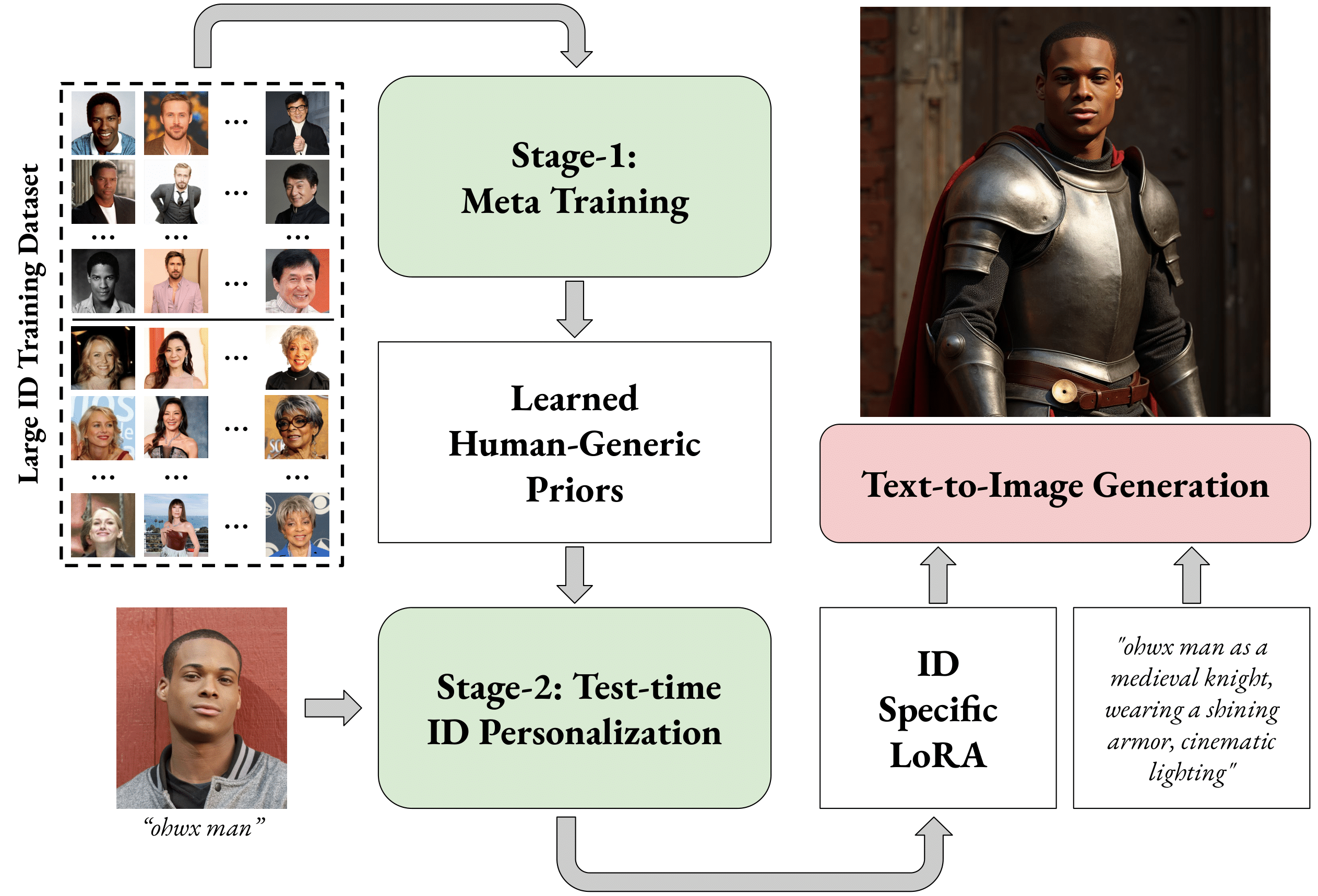

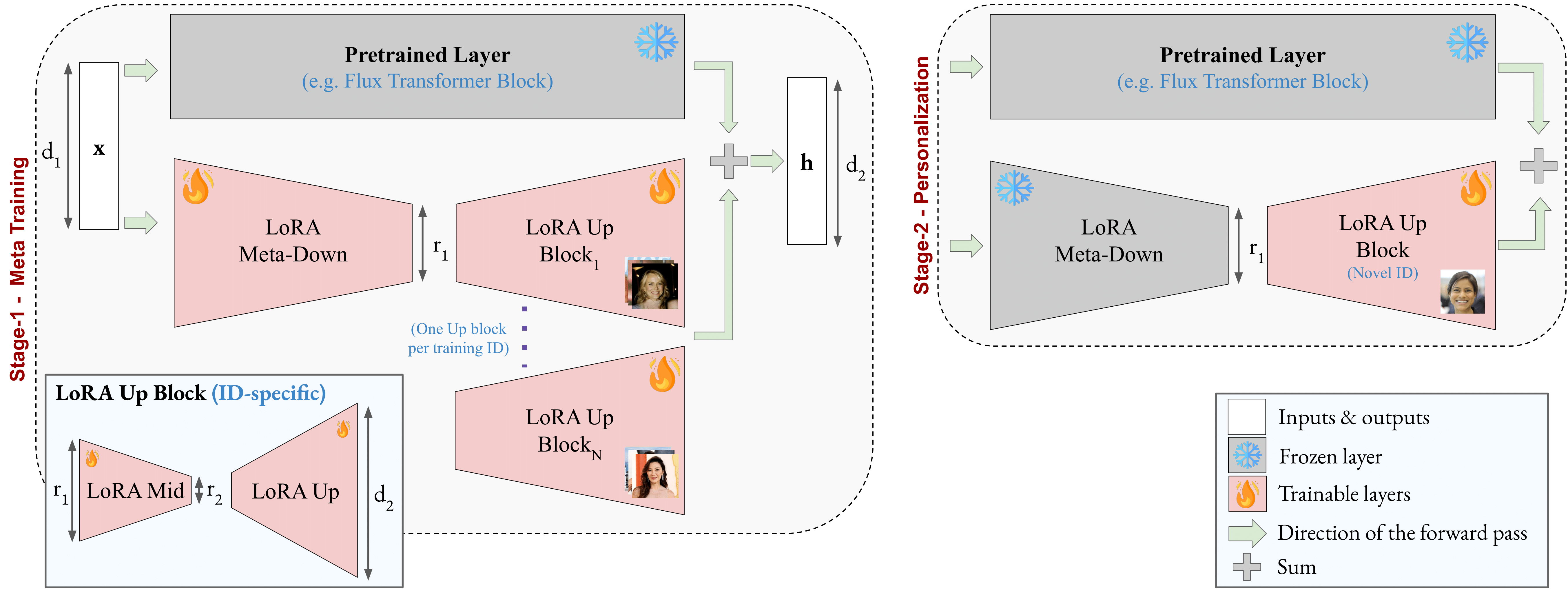

Meta-LoRA: Meta-Learning LoRA Components for Domain-Aware ID Personalization

A novel approach using meta-learning for Low-Rank Adaptation (LoRA) components in diffusion models, enhancing identity preservation in text-to-image generation.

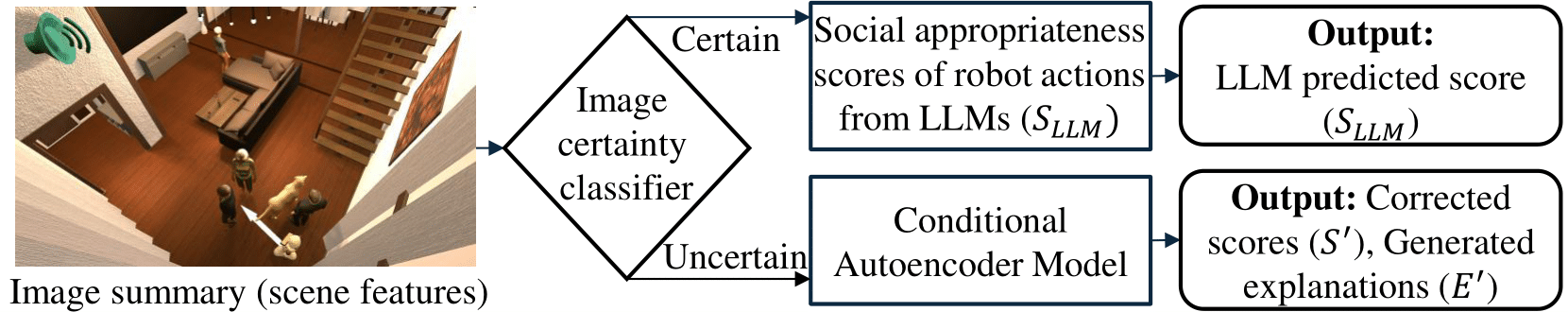

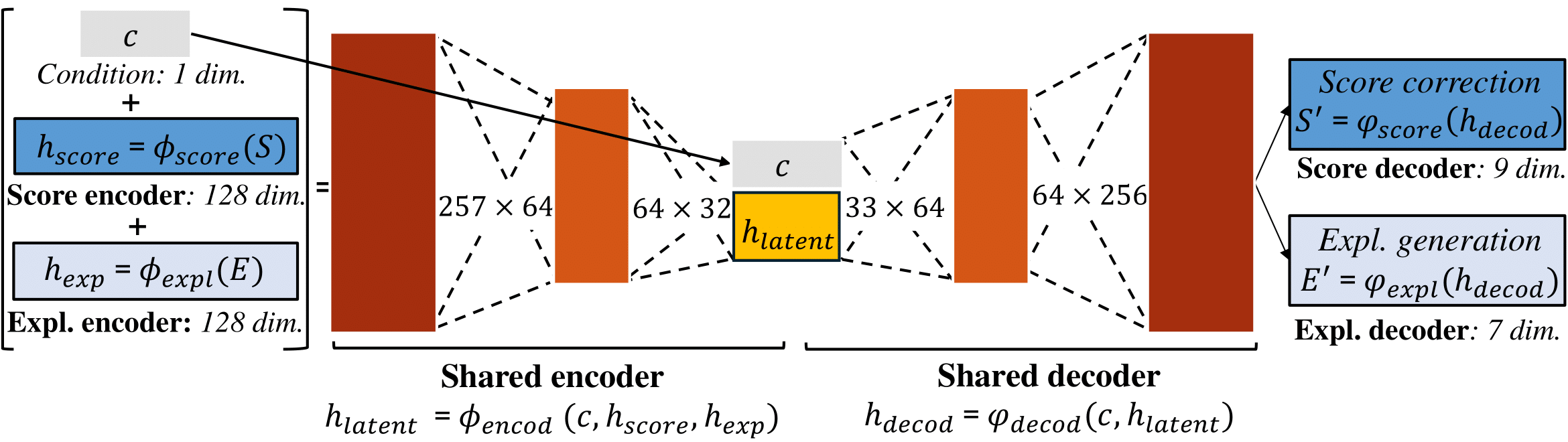

GRACE: Generating Socially Appropriate Robot Actions Leveraging LLMs and Human Explanations

A framework generating contextually appropriate robot behaviors by combining large language models with human social explanations for improved human-robot interaction.

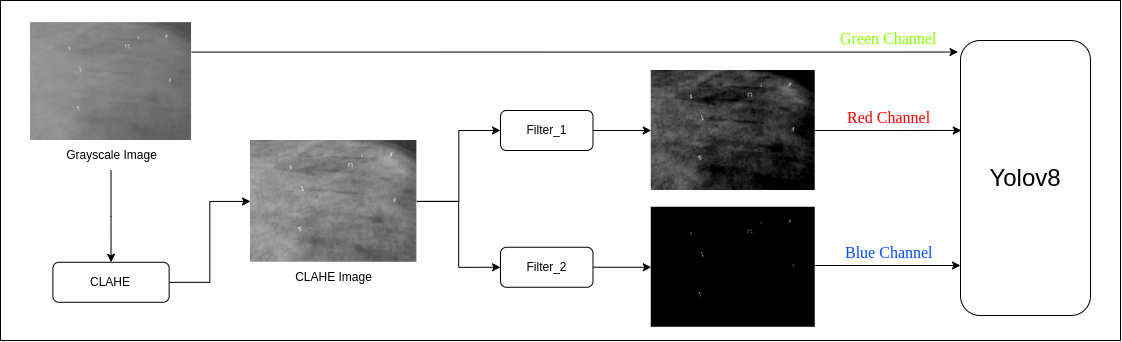

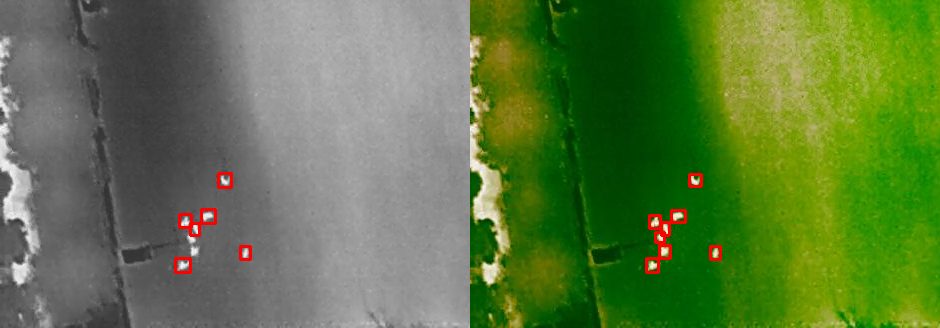

Enhanced Thermal Human Detection with Fast Filtering for UAV Images

An approach optimizing thermal human detection on UAV platforms using efficient filtering techniques for real-time performance on edge devices.

Peer Review & Academic Service

Experience

Research and engineering experience

Research Experience

INSAIT (Institute for Computer Science, AI and Technology)

Summer Undergraduate Research Fellow (SURF)

06/2025 - Present

Mentors: Dr. Danda Pani Paudel, Dr. Jan-Nico Zaech.

Working on enhancing vision-language models by creating new tasks that better probe and improve 3D perception. This work is part of the prestigious SURF program (4,000+ applicants, ≤0.25% acceptance rate) at INSAIT, an ETH Zürich/EPFL-founded institute supported by Google, AWS, and DeepMind.

METU ImageLab

Undergraduate Researcher

09/2024 - 06/2025

Advisor: Assoc. Prof. R. Gökberk Cinbiş.

Worked on personalized text-to-image generation with LoRA fine-tuning of Stable Diffusion models. Owned the experimental pipeline, reimplemented and tuned state-of-the-art baselines, proposed an identity resemblance metric, and ran ablations that shaped the final method and evaluation.

University of Cambridge (AFAR Lab)

Undergraduate Researcher

07/2024 - 09/2024

Advisor: Prof. Hatice Güneş.

Worked on human-centered robot perception and social appropriateness for robot actions. Designed the full data and evaluation pipeline on human judgments, predicted uncertainty in social appropriateness with a diverse set of models, and experimented with heteroscedastic losses. As second author on GRACE, constructed the human agreement classification component, helped define the task, specified baselines, and contributed at each step of the ICRA 2025 manuscript.

METU Intelligent Systems Lab

Undergraduate Researcher

07/2023 - 07/2024

Advisor: Assoc. Prof. Seyda Ertekin.

Worked on thermal imaging-based human detection for rescue operations on UAVs, focusing on real-time processing on edge devices (NVIDIA Jetson). First author on the IISEC 2023 publication, where I designed the fast filtering module, trained models, and conducted all experiments.

Professional Experience

Syntonym

Generative Computer Vision Researcher (Remote)

09/2024 - 06/2025

Researched image and video editing with diffusion models, focusing on controllable generation. Built production-ready pipelines for image and video face swapping.

Infodif

Computer Vision Engineer / Researcher

01/2024 - 07/2024

Developed and optimized a face recognition pipeline for the Turkish National Police. Designed multi-attribute recognition models that match suspects using only textual facial descriptions, with a mathematical scoring system robust to missing or ambiguous attributes.

AsisGuard

Candidate Computer Vision Engineer / Researcher

03/2023 - 12/2023

Led object detection and tracking projects for both thermal and RGB streams in edge surveillance products. Owned the full pipeline from dataset curation and model training to edge optimization on custom AI chips and NVIDIA Jetson Orin, while coordinating a team of interns.

Achievements

Recognitions of academic and research excellence

INSAIT SURF 2025

Selected for a prestigious 3-month Summer Undergraduate Research Fellowship at INSAIT, working with ETH Zürich-affiliated faculty.

4000+ applicants from 150+ countries

UIUC Rehg Lab Research Offer

Mentored by Ozgur Kara and offered a summer research role working with him at the University of Illinois Urbana-Champaign.

ICVSS 2025 Acceptance

Accepted to the 19th International Computer Vision Summer School (34% acceptance rate among 521 primarily MSc and PhD applicants). Declined due to INSAIT commitment.

Best Presentation & Top Paper. Guided Research Symposium

Received the best presentation award, and our paper was recognized as the best overall work among 27 papers, with a mean judge score of 13.8/15.

Top Project. Deep Generative Models

Recognized for the most complex and successful term project in the graduate "Deep Generative Models" course. Reimplemented a CVPR 2023 style transfer paper from scratch, resolving missing details and releasing the only public implementation.

Erasmus+ Traineeship Grant

Awarded funding support for a visiting research position at the University of Cambridge.

METU Merit Scholarship

Awarded a housing and dining scholarship for ranking in the top 1,000 (top 0.04%) among 2.5M+ applicants in the 2020 Turkish National University Entrance Exam.

High Honor Student

Recognized for academic excellence across 8 consecutive semesters.

Get In Touch

Open to research collaborations in computer vision and deep learning

Location

Ankara, Turkey

Academic References

Hobbies & Interests

Creative pursuits and recreational activities beyond academia

Music

I am a passionate self-taught pianist and also play violin and viola. I compose original pieces across diverse genres, using music as a creative outlet for emotional expression.

Physical Activities

I stay active through swimming for endurance and meditation, and play amateur tennis to challenge my reflexes and strategy.

Others

I enjoy strategic challenges like chess, particularly in blitz and rapid formats. I am also a spectator and near-professional player of cue sports, focusing on three-cushion billiards and snooker.